"Statistics are like swimwear - what they reveal is suggestive but what they conceal is vital."

9/19/2017

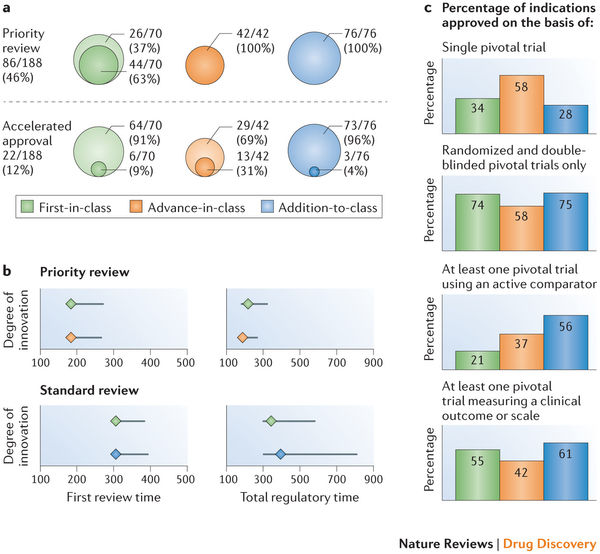

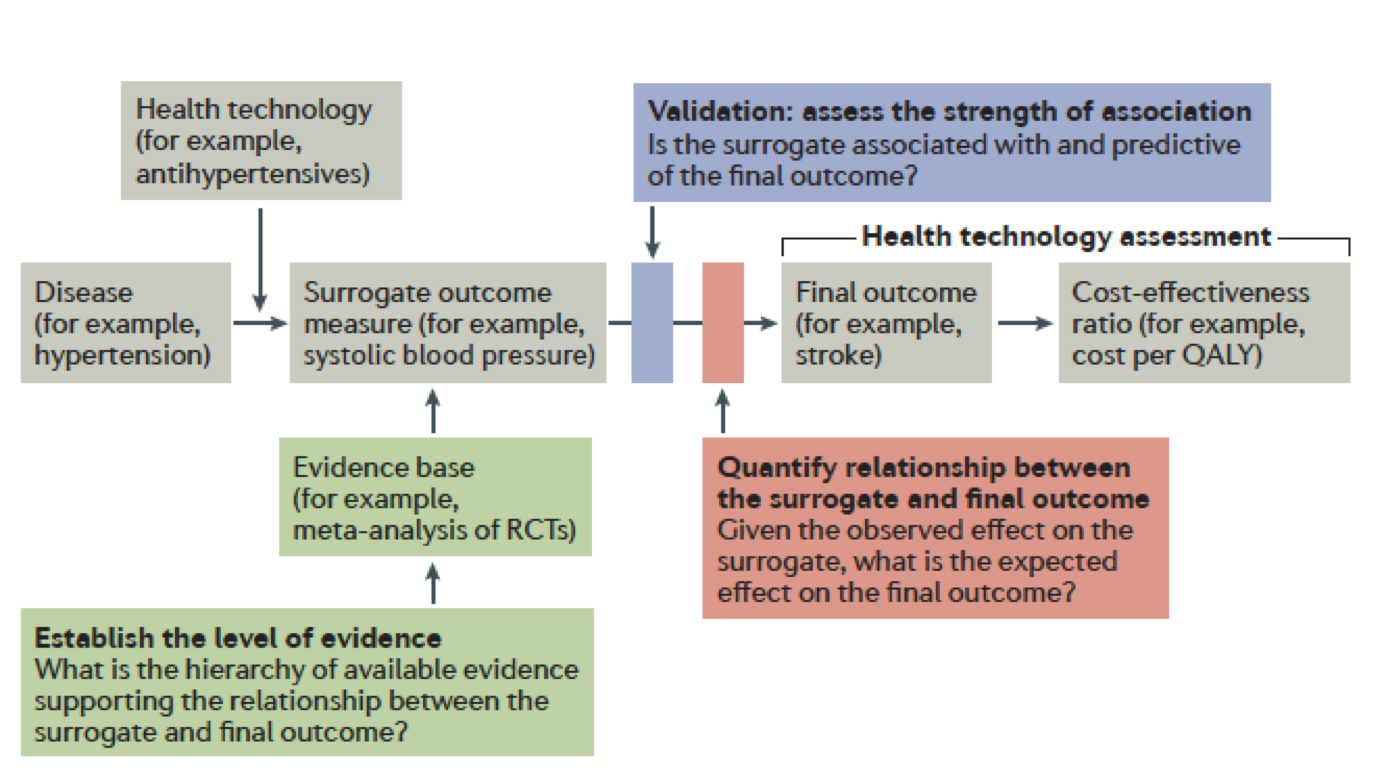

The quote is part of my email signature. It reminds me of the complexity of the decisions that healthcare providers and patients make at the point of care. "Patients have unprecedented access to health information, but lack the skills to interpret it," says Dr. Mahajan. I would argue that healthcare providers are in the same boat. At conferences such as the Joint Statistical Meetings, I often ask statisticians, "Who is the intended audience for your models and debates?" At times it seems that interpreting complex statistics is left to those of us who may be highly numerate but are often removed from the ideological debates that shape statistical practice.

A big data challenge in consultancy is having to tell clients their "baby is ugly." Data frameworks are created during preclinical planning, and brand teams often aren't aware of the role those frameworks play in the positioning and eventual marketing of an intervention or therapeutic. Discussing the limits of the wide-eyed optimism often rampant in drug discovery won't make you the most popular member of the discussion, but I would argue it may make you one of the most valuable. Full disclosure: the graphics above are a few I have recently used when discussing these topics with groups bringing a drug to market, assessing the competitive landscape, or guiding stakeholders through what gets reported and what it actually means from their individual perspectives. Links are included for additional granularity.

One of the remaining channels of direct communication with physicians is continuing medical education (CME). I continue to argue that many are wasting this opportunity by relying on self-serving, myopic educational offerings while limiting data collection and analysis to the "been there, done that" mentality of whatever hegemonic framework they are promoting (monetizing). The journey to "value-based" care is paved with vague terms like "value" and "innovation."
Patients, industry, the FDA, and insurance companies, for example, all face unique tensions around bringing innovative and effective therapies or interventions to patients as quickly as possible. Oncology has recently been the poster child of "hurry up and wait." Trials are trying to move faster with smaller patient populations, often identified by predetermined markers of success that depend on a heterogeneous group of clinical trial endpoints. How do we sift through the data and clinical findings? A compelling argument can be made for developing a framework to validate surrogate endpoints.

"...surrogates can result in market access for technologies that turn out to offer no true health benefit -- or even cause harm -- and can result in overestimation of treatment effects (and economic value), which can lead to inappropriate decisions on coverage."
Use of surrogate end points in healthcare policy: a proposal for adoption of a validation framework--Ciani, Buyse, Drummond, Rasi, Saad, and Taylor

You should be left with questions. Lots of questions.

• Can absolute standards of surrogacy be defined?
• Is an association approach sufficient, or should surrogacy be further explored using causal inference?
• If a surrogate is valid for a specific treatment, is it still valid for other treatments?
• Is the constancy assumption reasonable (changing treatment landscape)?
• Can an incomplete surrogate be used, e.g., for rescue therapy?

The Surrogate Threshold Effect (STE) for EFS as potential surrogate for OS in patients treated for Acute Myeloid Leukemia (AML)--Buyse, Schlenk, Donner, Burzykowski--JSM 2017

Here is the hot tip for improving critical thought and analytic approaches: public workshops are the best training ground for building expertise in judging the validity or weakness of particular data and statistical models. It matters because the better the quality of debate and consideration before late-stage clinical trials or drug approvals, the better the outcomes for all stakeholders.
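To make the surrogate threshold effect (STE) from the JSM 2017 title less abstract, here is a minimal sketch of the trial-level idea: regress each trial's treatment effect on the true endpoint (overall survival, OS, as a log hazard ratio) on its effect on the surrogate (event-free survival, EFS), then find the weakest surrogate effect for which the 95% prediction interval for the OS effect lies entirely below zero. This is a simplified sketch under loudly stated assumptions: unweighted least squares, no correction for estimation error in the trial-level effects, a normal quantile of 1.96 instead of a t quantile, and made-up trial data. The helper names (`fit_line`, `upper_prediction_limit`, `surrogate_threshold_effect`) are hypothetical; a real analysis would use a weighted meta-regression.

```python
import math

def fit_line(x, y):
    """Ordinary least squares fit of y = a + b*x.

    Returns (a, b, s, x_mean, sxx), where s is the residual standard error.
    """
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = y_mean - b * x_mean
    sse = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    s = math.sqrt(sse / (n - 2))
    return a, b, s, x_mean, sxx

def upper_prediction_limit(x0, fit, n, z=1.96):
    """Upper bound of an approximate 95% prediction interval for the
    true-endpoint effect of a new trial whose surrogate effect is x0."""
    a, b, s, x_mean, sxx = fit
    half_width = z * s * math.sqrt(1 + 1 / n + (x0 - x_mean) ** 2 / sxx)
    return a + b * x0 + half_width

def surrogate_threshold_effect(surrogate, true, step=0.001, lower=-3.0):
    """Hypothetical helper: scan surrogate log-HRs from 0 downward and return the
    first value whose predicted true-endpoint interval lies entirely below 0
    (a predicted benefit). Returns None if no such value exists in range."""
    fit = fit_line(surrogate, true)
    n = len(surrogate)
    for i in range(round(-lower / step) + 1):
        x0 = -i * step
        if upper_prediction_limit(x0, fit, n) < 0:
            return x0
    return None

# Made-up trial-level log hazard ratios (negative = benefit), for illustration only
efs_log_hr = [-0.1, -0.2, -0.3, -0.4, -0.5, -0.6]
os_log_hr = [-0.05, -0.12, -0.18, -0.22, -0.31, -0.35]

ste = surrogate_threshold_effect(efs_log_hr, os_log_hr)
if ste is not None:
    print(f"STE on the log-HR scale: {ste:.3f} (HR about {math.exp(ste):.2f})")
```

Read the result as: under these assumptions, a new trial would need an EFS effect at least as strong as the STE before one could predict a non-zero OS benefit. A weak trial-level association widens the prediction interval, which pushes the STE further from a hazard ratio of 1 or leaves it undefined.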
A recent in-person session of the Duke-Margolis Center for Health Policy, titled Public Workshop: Scientific and Regulatory Considerations for the Analytical Validation of Assays Used in the Qualification of Biomarkers in Biological Matrices, focused on discussion of this white paper.

We all have those annoying little quirks that, left unchecked, can drive us mad. I always look at objectives. Big data projects have them, clinical trials have them (endpoints), and so do medical education and research articles (null hypotheses); they are everywhere. My stone in the shoe is how poorly formed and ill-defined they are. Whatever we call them, they are often not actionable, measurable, time-bound, or, in the case of clinical trials, relevant. Let me explain.

It seems that precise, well-defined objectives carefully placed on a path to robust discoveries may be self-limiting. What if the most innovative discoveries can't be predicted in advance? I would argue that we may need to identify the steps along the way without knowing where they might lead. For example, there may be populations of cell types or immune signatures that we miss if we don't redefine what success looks like on the way to a singular objective, such as improving overall survival.

If deep learning focuses on training an artificial neural network (ANN) to learn, neuroevolution focuses on where the network itself comes from: it uses evolutionary algorithms to generate the network's weights and, often, its overall architecture. We often explain artificial intelligence by comparing it to how the brain works. An ANN's nodes loosely mimic neurons, and the connections between them mimic synapses; each connection carries a numeric "weight," and a stronger connection has a larger weight.
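A minimal sketch of what "weight" means in an ANN: a single artificial neuron multiplies each input by its connection weight, adds a bias, and passes the sum through an activation function (a sigmoid here). The inputs, weights, and the two-layer wiring below are made-up values for illustration only.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of inputs plus bias, squashed by a sigmoid."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 / (1 + math.exp(-total))

def layer(inputs, weight_rows, biases):
    """A fully connected layer: one neuron per row of weights."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

x = [0.5, -1.0]
# Hypothetical weights: in the first hidden neuron, input 1 has a much
# "stronger" connection (2.0) than input 2 (0.1)
hidden = layer(x, [[2.0, 0.1], [0.3, 0.3]], [0.0, 0.0])
output = neuron(hidden, [1.5, -0.5], 0.0)
print(hidden, output)
```

Training (in deep learning) or evolution (in neuroevolution) is simply a way of choosing these weight values.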

What does it mean to make progress in neuroevolution? In general, it involves recognizing a limitation on the complexity of the ANNs that can evolve and then introducing an approach to overcoming that limitation. For example, the fixed-topology algorithms of the '80s and '90s exhibit one glaring limitation that clearly diverges from nature: the ANNs they evolve can never become larger.
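The fixed-topology limitation can be seen in a minimal sketch of weight-only neuroevolution. Each genome is a flat list of weights for a network whose shape never changes, and a simple mutate-and-select loop (truncation selection with elitism, chosen here for brevity rather than to reproduce any specific historical algorithm) improves those weights. The task (XOR with a 2-2-1 network), population size, and mutation scale are assumptions for illustration.

```python
import math
import random

XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
N_WEIGHTS = 9  # fixed 2-2-1 topology: 4 hidden weights + 2 hidden biases + 2 output weights + 1 output bias

def sigmoid(z):
    return 1 / (1 + math.exp(-z))

def forward(w, x):
    """Decode the flat genome into the fixed 2-2-1 network and run it on input x."""
    h1 = sigmoid(w[0] * x[0] + w[1] * x[1] + w[2])
    h2 = sigmoid(w[3] * x[0] + w[4] * x[1] + w[5])
    return sigmoid(w[6] * h1 + w[7] * h2 + w[8])

def fitness(genome):
    """Negative squared error on XOR (higher is better)."""
    return -sum((forward(genome, x) - y) ** 2 for x, y in XOR)

def mutate(genome, rng, scale=0.5):
    """Perturb weights only: the genome length, and so the topology, never changes."""
    return [g + rng.gauss(0, scale) for g in genome]

def evolve(generations=300, pop_size=50, n_parents=10, seed=0):
    rng = random.Random(seed)
    pop = [[rng.uniform(-1, 1) for _ in range(N_WEIGHTS)] for _ in range(pop_size)]
    history = []  # best fitness at the start of each generation
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        history.append(fitness(pop[0]))
        parents = pop[:n_parents]  # the elite survive unchanged
        children = [mutate(rng.choice(parents), rng) for _ in range(pop_size - n_parents)]
        pop = parents + children
    pop.sort(key=fitness, reverse=True)
    return pop[0], history

best, history = evolve()
print(len(best), round(fitness(best), 3))
```

Nothing in this loop can add a neuron or a connection, so however long it runs, the network stays at nine weights. Methods such as NEAT relax this by adding mutations that insert new nodes and connections, letting the genome grow.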

If this area interests you, or you are working in machine learning with large datasets, read about a new class of algorithms called "illumination algorithms," a modern approach to neuroevolution. The shift is to focus on a broad cross-section of workable variations of what might be possible instead of looking for a single "optimal" solution.
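MAP-Elites is one widely cited illumination algorithm. Rather than keeping only the single best solution, it divides a space of behavior descriptors into cells and keeps the best solution found in each cell, producing a map of diverse, workable variations. The minimal sketch below uses a toy 2-D problem; the objective, the descriptor (here simply the solution's own coordinates), the 10×10 grid, and the mutation scale are all assumptions chosen for brevity.

```python
import random

GRID = 10  # cells per descriptor dimension

def objective(x):
    """Toy objective with a single peak at (0.5, 0.5); higher is better."""
    return -((x[0] - 0.5) ** 2 + (x[1] - 0.5) ** 2)

def descriptor(x):
    """Map a solution to a grid cell; here the descriptor is just its coordinates."""
    return tuple(min(int(v * GRID), GRID - 1) for v in x)

def clip(v):
    """Keep coordinates inside [0, 1]."""
    return min(max(v, 0.0), 1.0)

def map_elites(iterations=5000, n_init=100, scale=0.1, seed=0):
    rng = random.Random(seed)
    archive = {}  # cell -> (objective value, solution): the elite of each niche

    def try_insert(x):
        cell, f = descriptor(x), objective(x)
        if cell not in archive or f > archive[cell][0]:
            archive[cell] = (f, x)

    for _ in range(n_init):  # seed the map with random solutions
        try_insert([rng.random(), rng.random()])
    for _ in range(iterations):  # mutate a randomly chosen elite and try to place the child
        _, parent = rng.choice(list(archive.values()))
        try_insert([clip(v + rng.gauss(0, scale)) for v in parent])
    return archive

archive = map_elites()
print(f"{len(archive)} of {GRID * GRID} cells filled")
```

A single-objective optimizer on this problem would return one point near (0.5, 0.5); the archive instead keeps the best-performing solution in every region of the descriptor space it manages to reach.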

The main reason for bringing neuroevolution into the discussion is the latest advances in immuno-oncology. Given recent phase III failures, perhaps we are too focused on objectives or clinical study endpoints such as overall survival and progression-free survival. Defining a broad cross-section of workable variations might be more successful and informative.

Chimeric antigen receptor (CAR) T cell infusion has been shown to increase the expression of many genes in predefined immune signatures, including T cell-related genes, chemokines, inhibitory immune checkpoints, and lymphocyte-activation gene 3 (LAG3). Maybe there are too many clinical steps along the path for a singularly defined objective to serve research goals or, more importantly, patients. For example, wouldn't it be informative to address the complexity of cancer as a system? We have multiple measures rolled into a clinical trial endpoint that decides whether an investigational product progresses to the next phase, which patients may benefit, and who funds the research. Meanwhile, we must consider pre-existing immunity, the type and density of immune cells, the spatiotemporal dynamics of intratumoral immune cells, cancer vaccines, the role of cytokines, CD122, T cell bispecifics, and a host of other immune factors.

Bringing to Life the Science around Innovative New Drugs, Gene and Cell Therapies--noveltargets.com

A straight path never leads anywhere except to the objective.--André Gide

Bonny is a data enthusiast applying curated analysis and visualization to persistent tensions between health policy, economics, and clinical research in oncology.
